Articles in the series “Exchange forensics: The mysterious case of ghost mail”: [1] [2] [3]

[Note: This is a work of fiction; the characters and situations are not real. The only real thing is the technical part, which is based on a mixture of work done, experiences of other colleagues and research carried out. For the same technical content with less narrative, you can watch the video of the talk the author gave at the 11th STIC Conference of the CCN-CERT here]

After a sleepless night (tossing and turning, brooding over the incident and trying to understand what may have happened, what we may have overlooked and what we still need to try), we return to the Organization loaded with caffeine.

Autopsy has finished processing the hard disk image, and a first superficial analysis of the results confirms our initial theory: the user’s computer is clean. In fact, it is so clean that the malicious email never even touched it. So everything that happened must have happened on the Exchange.

We keep thinking about the incident, and something irks us: if the attackers had complete control of the Exchange, they could have deleted the mail from the Recoverable Items folder, and they didn’t. Yet they did manage to erase it from the EventHistoryDB table, which operates at a lower level … or perhaps they didn’t either.

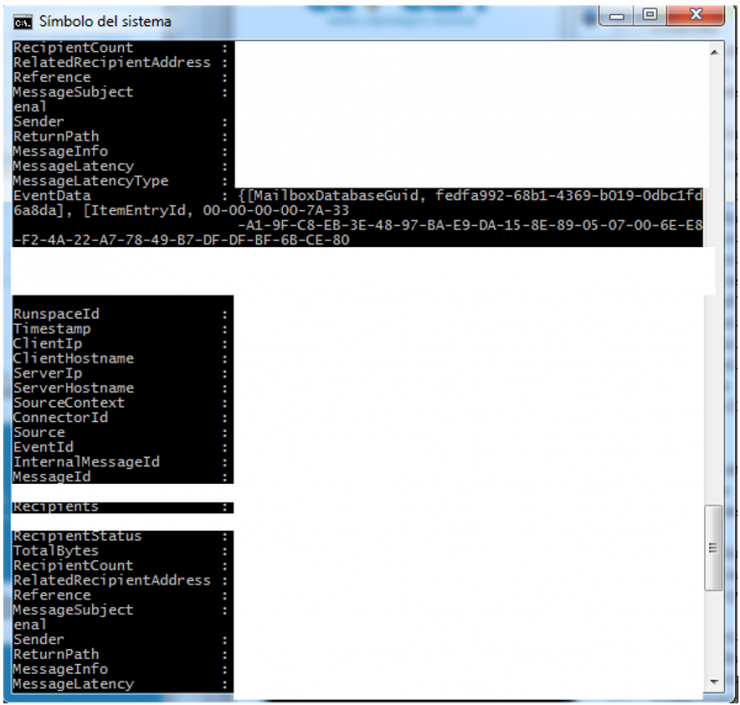

We go to the Systems area armed with croissants (free food opens many doors), and we all sit down with the Exchange administrator to go through every command that was executed. As soon as we see him run the first one, we understand what is happening. The output of the PowerShell command looks something like this (irrelevant parts have been blanked out):

If you look carefully, the ItemEntryID is a fairly long value separated by hyphens, so on an 80-column Windows terminal it spans several lines. We had spotted the hyphen issue and had it under control, but in the Message Tracking logs the ItemEntryID (without hyphens there) always fit on one line. And when searching for strings in logs, fgrep is a great tool that does exactly what you tell it to, so if you ask it to find a complete string that is split across several lines … it will not find it.
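To make the trap concrete, here is a minimal Python sketch of a search that survives terminal wrapping. It assumes the console output has been saved to a text file; `find_wrapped` is a hypothetical helper (not part of any tool mentioned here) and the ID used below is a made-up placeholder, not a real ItemEntryID.

```python
import re


def find_wrapped(needle: str, text: str) -> bool:
    """Return True if `needle` appears in `text`, even when an 80-column
    console has split it across several lines.

    Hypothetical helper: it deletes every whitespace character (including
    the inserted line breaks) from the captured output before searching,
    which a plain fgrep on the raw text will not do.
    """
    flattened = re.sub(r"\s+", "", text)
    return needle in flattened


# Placeholder console capture with the ID wrapped at an arbitrary column.
captured = "ItemEntryID : 0000-1111-2222-33\n33-4444\nAction : ObjectDeleted"
needle = "0000-1111-2222-3333-4444"
print(needle in captured)              # plain substring search
print(find_wrapped(needle, captured))  # whitespace-insensitive search
```

Flattening can also glue neighbouring fields together and produce accidental matches, so it is best used to locate candidate lines that are then checked by eye.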

After a streak of swear words that would make a Marseille dockworker blush, invocations of Lovecraft’s Great Old Ones and assorted blasphemies, we repeat the search. Now we are talking: this time we do find the damn ItemEntryID in the EventHistoryDB, like a smoking gun, and it confesses in great detail what happened:

- An email object was created at t = 0 (ObjectCreated action).

- In t + 5 minutes the email was sent (MailSubmitted action).

- At t + 6 the mail was deleted from the mailbox (ObjectDeleted action).
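Reading the EventHistoryDB rows is easier once they are laid out as a relative timeline like the one above. A minimal Python sketch, assuming the rows have already been exported as (timestamp, action) pairs; the function name and the dates are hypothetical placeholders chosen only to reproduce the offsets in the text, not real data from the case:

```python
from datetime import datetime


def relative_timeline(events):
    """Sort (timestamp, action) pairs chronologically and express each
    one as minutes elapsed since the first event (t = 0)."""
    ordered = sorted(events)
    t0 = ordered[0][0]
    return [((ts - t0).total_seconds() / 60, action) for ts, action in ordered]


# Placeholder timestamps, deliberately listed out of order.
events = [
    (datetime(2019, 1, 1, 10, 6), "ObjectDeleted"),
    (datetime(2019, 1, 1, 10, 0), "ObjectCreated"),
    (datetime(2019, 1, 1, 10, 5), "MailSubmitted"),
]
for minutes, action in relative_timeline(events):
    print(f"t + {minutes:g} min: {action}")
```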

And most importantly: all these actions were carried out through OWA. Didn’t we agree that OWA access was restricted to the VPN, for which the user had no credentials? We need to have some serious words with that OWA…

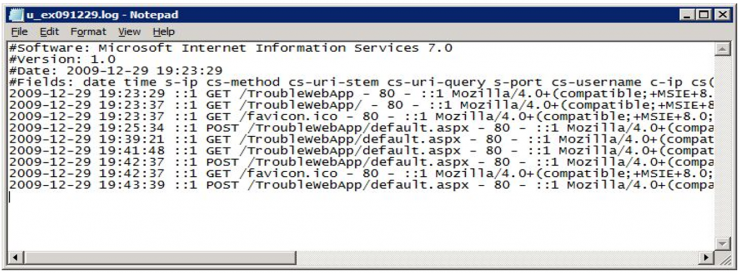

We ask Systems for the logs of the cluster’s two CAS (Client Access Server) nodes, which are the ones serving OWA. Half an hour later they hand us 200 MB of plain-text logs covering the last 72 hours, in a format similar to this one (if they look very similar to IIS logs, that is because CAS actually serves its web services through IIS):

Since the log is reasonably structured, we can start applying the “stripping” technique: we remove the fields that add no value and filter out the values we already know. In this case the technique works wonders, because five minutes later Maria exclaims: ‘Firefox? Why so many Firefox entries, if we don’t have it deployed in the Organization?’
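The first passes of that stripping can be sketched in Python. CAS writes IIS-style W3C extended logs, where a `#Fields:` directive names the space-separated columns that follow (IIS writes spaces inside the User-Agent as `+`). The sketch parses such lines into dicts and then counts the User-Agents left after discarding the browsers we already know. The function names, the field list and the sample lines are illustrative placeholders using documentation IP ranges, not excerpts from the real logs:

```python
from collections import Counter


def parse_w3c(lines):
    """Yield one dict per log entry, keyed by the names declared in the
    most recent '#Fields:' directive; other '#' lines are skipped."""
    fields = []
    for raw in lines:
        line = raw.strip()
        if line.startswith("#Fields:"):
            fields = line[len("#Fields:"):].split()
        elif line and not line.startswith("#") and fields:
            values = line.split()
            if len(values) == len(fields):  # skip truncated lines
                yield dict(zip(fields, values))


def strip_and_count(records, field, known_markers):
    """Drop every entry whose `field` contains an already-known marker
    and count the values that remain."""
    residue = Counter()
    for rec in records:
        value = rec.get(field, "")
        if not any(marker in value for marker in known_markers):
            residue[value] += 1
    return residue


SAMPLE = [
    "#Fields: date time s-ip cs-method cs-uri-stem cs-username c-ip cs(User-Agent)",
    "2019-01-01 09:00:00 192.0.2.5 GET /owa/ user1 10.0.0.8 "
    "Mozilla/5.0+(Windows+NT+6.1;+Trident/7.0;+rv:11.0)+like+Gecko",
    "2019-01-01 10:00:00 203.0.113.10 POST /owa/auth.owa user1 198.51.100.7 "
    "Mozilla/5.0+(Windows+NT+10.0;+rv:64.0)+Gecko/20100101+Firefox/64.0",
    "2019-01-01 10:05:00 203.0.113.10 POST /owa/service.svc user1 198.51.100.7 "
    "Mozilla/5.0+(Windows+NT+10.0;+rv:64.0)+Gecko/20100101+Firefox/64.0",
]

entries = list(parse_w3c(SAMPLE))
# Internet Explorer (Trident/MSIE) and Chrome are the browsers deployed in-house.
print(strip_and_count(entries, "cs(User-Agent)", ["Trident", "MSIE", "Chrome"]))
```

Matching on substrings is deliberately crude: any Chromium-based browser also contains `Chrome` in its User-Agent, so the marker list has to reflect what is actually deployed.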

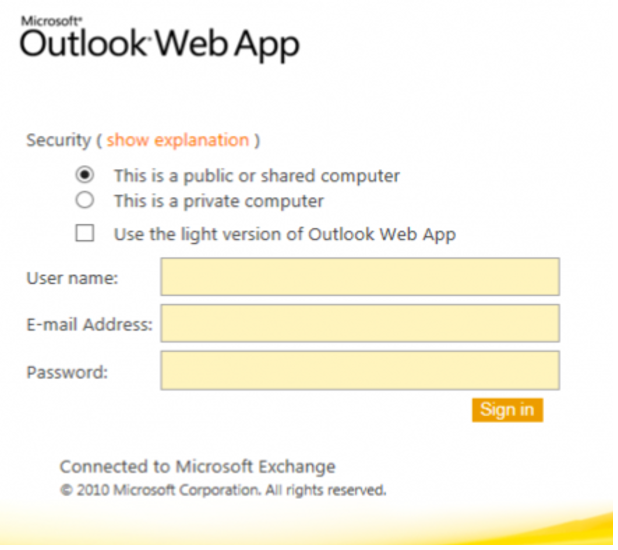

Users tend to be creatures of habit: if they use Internet Explorer and Chrome at work, they most likely use the same at home. Pivoting on that Firefox User-Agent in the logs, we hit the jackpot: a public IP address of the Organization that is NOT the VPN access. When we browse to that IP address, the pieces start to fall into place, because it shows exactly what we expected:

The question is simple: what is OWA doing on a public IP other than the VPN one? After several rounds of questions to Systems and Networks, we finally solve the mystery: it is the IP used for mobile phone access.
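The User-Agent pivot that led us to that mobile-access address is just one more filter over the parsed entries: keep those carrying the suspicious User-Agent and count the values another field takes. A minimal Python sketch, assuming the entries are already parsed into dicts keyed by W3C field names and that the published address is recorded in `s-ip` (which field really holds it depends on how the CAS and any NAT in front of it are set up); the helper name and the addresses are placeholders:

```python
from collections import Counter


def pivot(records, match_field, needle, target_field):
    """Among the records whose `match_field` contains `needle`, count how
    often each value of `target_field` appears."""
    return Counter(
        rec.get(target_field, "-")
        for rec in records
        if needle in rec.get(match_field, "")
    )


UA = "cs(User-Agent)"
records = [
    {UA: "Mozilla/5.0+(Windows+NT+6.1;+Trident/7.0;+rv:11.0)+like+Gecko", "s-ip": "192.0.2.5"},
    {UA: "Mozilla/5.0+(Windows+NT+10.0;+rv:64.0)+Gecko/20100101+Firefox/64.0", "s-ip": "203.0.113.10"},
    {UA: "Mozilla/5.0+(Windows+NT+10.0;+rv:64.0)+Gecko/20100101+Firefox/64.0", "s-ip": "203.0.113.10"},
]
print(pivot(records, UA, "Firefox", "s-ip"))
```

Swapping `target_field` for `c-ip` or `cs-username` gives the other obvious pivots (where the attacker came from, which accounts were used) with the same function.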

When the Systems department set up the Exchange, they took into account that access to OWA had to be protected, so they published it on an internal IP reachable only through the VPN.

However, when they rolled out mobile mail, they did not take into account that ActiveSync and OWA (both apparently installed by default) are served on the same port, so by opening access for mobile phones they were simultaneously opening OWA to the WHOLE Organization.

The party does not end there: pivoting on the subnet of that public address, we find ANOTHER public IP address (the backup mobile access) … and we also discover accesses from both IP addresses to three user accounts other than our patient zero. Bingo, ladies and gentlemen!
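Pivoting on the subnet can be done with Python’s standard `ipaddress` module: keep the entries whose address falls inside the network of the exposed IP and list which accounts came in through each address. A minimal sketch, assuming entries parsed into dicts keyed by W3C field names with the published address in `s-ip` and the account in `cs-username`; the /24 prefix, the helper name and the account names are hypothetical placeholders:

```python
import ipaddress


def accounts_by_address(records, subnet, ip_field="s-ip", user_field="cs-username"):
    """Map every address of `subnet` seen in `ip_field` to the set of
    accounts (`user_field`) that reached the server through it."""
    net = ipaddress.ip_network(subnet)
    hits = {}
    for rec in records:
        try:
            addr = ipaddress.ip_address(rec.get(ip_field, ""))
        except ValueError:
            continue  # '-' or malformed value in the log
        if addr in net:
            hits.setdefault(str(addr), set()).add(rec.get(user_field, "-"))
    return hits


records = [
    {"s-ip": "203.0.113.10", "cs-username": "user1"},
    {"s-ip": "203.0.113.11", "cs-username": "user2"},
    {"s-ip": "203.0.113.11", "cs-username": "user3"},
    {"s-ip": "192.0.2.5", "cs-username": "user4"},
    {"s-ip": "-", "cs-username": "-"},
]
print(accounts_by_address(records, "203.0.113.0/24"))
```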

Once the incident has really been identified, we quickly move on to the containment phase. We cannot shut down mobile access as such (the service has to stay up), but thank $deity the Organization’s firewalls are advanced enough to apply URI filters. We put together a quick dictionary of URI terms to block, based on the logs and a couple of Internet searches, and pass it to the Perimeter Security department so they can deploy it on both accesses.
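The logic of such a URI filter is simple; the actual rule syntax depends entirely on the firewall vendor, so the Python sketch below only models the decision. It assumes the default Exchange virtual directories (`/owa` for Outlook Web App, `/ecp` for the Exchange Control Panel, `/Microsoft-Server-ActiveSync` for mobile devices); the deny list is a hypothetical stand-in for the dictionary we actually built:

```python
from urllib.parse import urlsplit

# Hypothetical deny list: path prefixes that must not be reachable through
# the mobile-access IPs, while ActiveSync stays allowed.
DENY_PREFIXES = ("/owa", "/ecp")


def is_blocked(uri, deny_prefixes=DENY_PREFIXES):
    """Return True if the request path starts with a denied prefix.
    Matching is case-insensitive (IIS generally treats paths that way) and
    works on whole path segments, so '/owa' does not also catch '/owax'."""
    path = urlsplit(uri).path.lower()
    return any(path == p or path.startswith(p + "/") for p in deny_prefixes)


for uri in ("/owa/auth/logon.aspx", "https://mail.example.org/OWA/",
            "/ecp/", "/Microsoft-Server-ActiveSync?Cmd=Sync"):
    print(uri, "->", "BLOCK" if is_blocked(uri) else "ALLOW")
```

Matching whole path segments rather than raw substrings keeps the dictionary from accidentally blocking unrelated paths that merely contain a blocked term.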

The next step is to force a password change for all compromised users (after first making sure that the password policy is strong: 12 characters minimum, complexity requirements and monthly expiration).
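Checking a candidate password against that policy is easy to express in code. A minimal Python sketch: the text does not spell out the complexity rules, so the “at least three of four character classes” requirement below is an assumption, loosely modelled on the usual Windows complexity setting, and 30 days stands in for monthly expiration. Both function names are hypothetical:

```python
from datetime import datetime, timedelta


def meets_policy(password, min_length=12, min_classes=3):
    """Length and complexity check: at least `min_length` characters drawn
    from at least `min_classes` of lowercase, uppercase, digits and others."""
    classes = [
        any(c.islower() for c in password),
        any(c.isupper() for c in password),
        any(c.isdigit() for c in password),
        any(not c.isalnum() for c in password),
    ]
    return len(password) >= min_length and sum(classes) >= min_classes


def is_expired(last_set, now, max_age=timedelta(days=30)):
    """Monthly expiration, approximated as 30 days since the last change."""
    return now - last_set > max_age


print(meets_policy("Summer2019"))       # only 10 characters
print(meets_policy("Correct-Horse-9"))  # 15 characters, four classes
print(is_expired(datetime(2019, 1, 1), datetime(2019, 2, 15)))
```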

Once the doors have been closed to the attackers, we move on to the eradication phase: we collect evidence from the computers of the other three compromised users and analyse it for any malicious behaviour. We also check every email sent through the Exchange server in the last few days, to verify that no more malicious emails have gone out.
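One way to start that review is to narrow the Message Tracking entries down to submissions by the compromised accounts. A minimal Python sketch, assuming the log files have already been parsed into dicts; the keys mirror Message Tracking field names (`date-time`, `event-id`, `sender-address`), but the pre-parsed `datetime` values, the helper name, the addresses and the three-day window are assumptions for illustration:

```python
from datetime import datetime, timedelta


def submitted_by(records, senders, since):
    """Return the SUBMIT events sent by any of `senders` at or after
    `since`; sender addresses are compared case-insensitively."""
    wanted = {s.lower() for s in senders}
    return [
        r for r in records
        if r["event-id"] == "SUBMIT"
        and r["sender-address"].lower() in wanted
        and r["date-time"] >= since
    ]


now = datetime(2019, 1, 4, 12, 0)
records = [
    {"date-time": datetime(2019, 1, 3, 9, 0), "event-id": "SUBMIT", "sender-address": "User1@example.org"},
    {"date-time": datetime(2019, 1, 3, 9, 0), "event-id": "DELIVER", "sender-address": "user1@example.org"},
    {"date-time": datetime(2018, 12, 20, 9, 0), "event-id": "SUBMIT", "sender-address": "user1@example.org"},
    {"date-time": datetime(2019, 1, 3, 9, 5), "event-id": "SUBMIT", "sender-address": "other@example.org"},
]
hits = submitted_by(records, ["user1@example.org"], now - timedelta(days=3))
print(len(hits))
```

The same filter with the full list of senders removed gives the broader “everything sent in the last few days” view the text describes.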

However, we still have some loose ends to tie up. My partner shows me the OWA logs and points out a few lines. What those lines contain, and the solution to the mystery, will be revealed in the last chapter…